Hydraulic fracturing (AKA “fracking”) is in the news every day, and often in a disparaging way, despite the great benefits bestowed on nations applying the process, especially the US.

On a recent river cruise I found myself at a table with a couple from California, and the woman began spouting about the dangers and horribleness of fracking. My civility censor was suppressed by the wine I’d consumed, and I interrupted to say she was talking bullshit. She halted, then asked her husband, a retired geologist, to comment, and he stated that fracking is a risky business. The geologist husband did not present any evidence for his view; IMO he was only speaking to support his spouse. I said I respected his opinion but still disagreed. The next day I apologized for my rudeness but said I still thought she had been misled. We shared a congenial dinner later on, but avoided the subject.

The experience revealed I had been unprepared to engage on the details of the fracking issue. So this post is to summarize some research to assemble persuasive facts and resources to counter the fear mongering on this subject.

1. Obama’s EPA Found Fracking Has Not Contaminated Drinking Water

(Source: EPA Has Not Actually Changed Its Conclusion On Risks Of Fracking To Drinking Water by Robert Rapier for Forbes) Excerpts in italics with my bolds.

First, let me provide a bit of background on hydraulic fracturing. I find that most people who are against fracking don’t actually know what it is. The EPA report goes out of its way to blur the lines as well by lumping it all into “activities in the hydraulic fracturing water cycle.” By doing this, if a guy driving a truck filled with fracking chemicals has a wreck, it’s a “fracking issue.” So let’s define some terms.

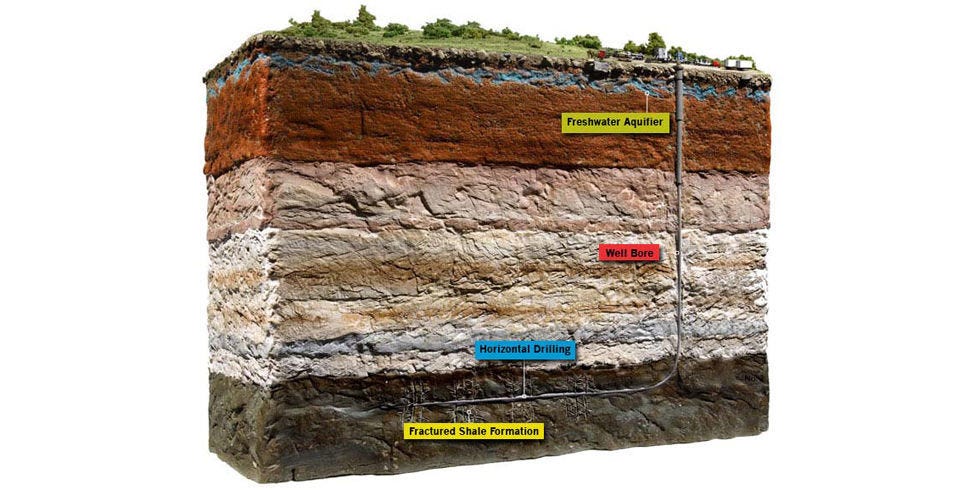

Hydraulic fracturing has been around since the late 1940s, and has now been used in the U.S. more than a million times to increase production from oil and gas wells. Fracking involves pumping water, chemicals and a proppant down an oil or gas well under high pressure to break open channels (fractures) in the reservoir rock trapping the deposit. Oil and gas do not travel easily through some formations, which is why they need to be fractured. The proppant is a granular material (usually sand) designed to hold those channels open, allowing the oil (or natural gas) to flow to the well bore.

While fracking has been around for decades, two developments in recent years are responsible for thrusting the technique into the public eye. The first is the fairly recent development in which fracking was combined with horizontal drilling, another common technique used in the oil and gas industry.

Like fracking, horizontal drilling was invented decades ago, and has been widely used in the oil and gas industry since the 1980s. As its name implies, horizontal drilling involves drilling down to an oil or gas deposit and then turning the drill horizontal to the formation to access a greater fraction of the deposit.

The marriage of these two techniques of hydraulic fracturing and horizontal drilling enabled the shale oil and gas boom in the U.S.

But the second development is what primarily thrust the technique(s) into the public spotlight. Some of the shale oil and gas formations are in areas that had never experienced significant fossil fuel development. Many locals resented this intrusion into their lives, and anti-fracking sentiments fed into a great deal of misinformation around the technique.

The movie Gasland is a perfect example. Director Josh Fox, whose family farm lies atop the Marcellus Shale in Pennsylvania, relied on misinformation and appeals to emotion instead of scientific data. Nevertheless, it was embraced by anti-fracking activists, and many who had never heard of fracking became convinced the technique was regularly polluting water supplies.

The concern among anti-fracking activists was that the fractures that allowed oil and gas to reach the well bore could also allow oil, gas, and chemicals to seep into the water supplies. But the reason this is a remote possibility is that a mile or more of rock will separate an oil and gas formation that is being fractured and an underground water resource. The fractures themselves extend for a few hundred feet, thus unsurprisingly there has never been a proven case where chemicals migrated from a fracked zone into water supplies.

That hasn’t stopped some from claiming that fracking has contaminated water supplies. However, those cases have always been a result of some activity peripheral to fracking. For example, if a well is improperly cemented it can leak. That in fact has happened, leading to the charge that “fracking contaminated the water.” There is an important distinction, however, and that is that this is not a result of the fracking process. A well may leak regardless of whether it was fracked. But activists (and now the EPA) seem bent on blurring the lines to the greatest extent possible by lumping lots of peripheral activities into the “fracking process.”

In 2010, Congress asked the EPA to investigate the safety of fracking. In 2015, the EPA issued a draft report. The bombshell statement from that report was that there was no evidence that fracking had “led to widespread, systemic impacts on drinking water resources in the United States.” This report was cheered by the fossil fuel industry, but caused a backlash with environmentalists, and spawned many counterclaims that the “fracking process” had led to contaminated water.

In December 2016 the EPA released its final report on the topic: Hydraulic Fracturing for Oil and Gas: Impacts from the Hydraulic Fracturing Water Cycle on Drinking Water Resources in the United States. Environmentalists were quick to note that the EPA had deleted its previous claim of no evidence of widespread water contamination, and were now reporting that “hydraulic fracturing activities can impact drinking water resources under some circumstances.” This story from The New York Times, for instance, was pretty typical of the reporting on the issue: Reversing Course, E.P.A. Says Fracking Can Contaminate Drinking Water.

But did the EPA actually reverse course? No. They gave examples where fracking could contaminate water. For instance they state that “Injection of hydraulic fracturing fluids directly into groundwater resources” can cause contamination. Yeah, no joke. Likewise, filling your car with gasoline can contaminate drinking water, because if you spill the gasoline all over the ground, it can get into the drinking water.

The EPA’s final report on hydraulic fracturing wasn’t that much different from the draft report. As in the previous report, the EPA noted that activities related to — but not exclusive to — fracking, have contaminated water supplies. Chemical spills happen all the time, but if the chemicals in question are for fracking, it becomes a “fracking issue.” Note that if the chemicals in question are to be used for fighting fires, we don’t say “firefighting contaminates water.” We should properly identify and address the actual problem, which in this instance would be the cause of the chemical spill.

Ultimately, the final report deleted a phrase from the draft report that there was no evidence of widespread impact on water supplies, and selectively used hypotheticals to show how fracking “could” contaminate water supplies. This is the Obama Administration laying down one more speed bump for the oil and gas industry while it still can.

2. Discredited Fracking Studies Are Used to Target School Children

(Source: New Activist Report Rehashes Discredited Fracking Studies to Target School Children by Seth Whitehead for EnergyinDepth)

A new Environment America “report” uses a couple of old anti-fracking tactics (exploitation of children and blatant misinformation from activist studies) to try to stoke fears and rally support for its extremist call to ban fracking nationwide.

The ominously-titled “Dangerous and Close: Fracking Puts the Nation’s Most Vulnerable People at Risk” finds there are nearly 2,000 child care facilities, better than 1,300 schools, nearly 250 nursing care providers and more than 100 hospitals within a one-mile radius of fracked wells in the nine states examined, stating:

“Given the scale and severity of fracking’s impacts, fracking should be prohibited wherever possible and existing wells should be shut down beginning with those near institutions that serve our most vulnerable populations.”

Here are the report’s most egregious claims, followed by the facts.

Environment America Claim: “Fracking creates a range of threats to our health, including creating toxic air pollution that can reduce lung function even among healthy people, trigger asthma attacks, and has been linked to premature death. Children and the elderly are especially vulnerable to fracking’s health risks.”

REALITY: There is actually ample evidence that fracking is improving overall air quality and health by reducing major pollutants such as fine particulate matter, sulfur dioxide and nitrogen dioxide. Furthermore, all three studies EA singles out as “evidence” that close proximity to fracking sites can lead to the myriad of adverse health effects have been thoroughly debunked.

EA even cites an Earthworks study that claims “A series of 2012 measurements by officials of the Texas Commission on Environmental Quality (TCEQ) found VOCs levels so high at one fracking location that the officials themselves were forced to stop taking measurements and leave the site because it was too dangerous for them to remain.”

EA fails to mention TCEQ responded to Earthworks’ report by saying the agency has collected “several millions of data points for volatile organic compounds” in the Barnett Shale and Eagle Ford Shale and “Overall, the monitoring data provide evidence that shale play activity does not significantly impact air quality or pose a threat to human health.”

EA also conveniently ignores that the West Virginia Department of Environmental Protection (DEP) and the Colorado Department of Public Health (CDPH) have conducted air monitoring near well sites as well and found no credible risk to public health.

Environment America Claim: “Currently, oil and gas companies are exempt from key provisions in the Safe Drinking Water Act, the Clean Air Act, the Clean Water Act, and the Resource Conservation and Recovery Act.”

REALITY: The notion that the oil and natural gas industry is under-regulated is an absolutely absurd narrative that activists such as EA continue to push. Oil and gas production activities are subject to eight federal laws, including all relevant provisions of the Safe Drinking Water Act (SDWA); Clean Water Act (CWA); Clean Air Act (CAA); Resource Conservation and Recovery Act (RCRA); Comprehensive Environmental Response, Compensation, and Liability Act (CERCLA); Emergency Planning and Community Right-to-Know Act (EPCRA); Toxic Substances Control Act (TSCA); and Federal Insecticide, Fungicide and Rodenticide Act (FIFRA). Additionally, the oil and gas production sector is heavily regulated at the state level.

Environment America Claim: “Exposure to low levels of many of the chemicals used in or generated by oil and gas extraction activities can contribute to a variety of health effects, including asthma, cancer, birth defects, damage to the reproductive system and impaired brain development. For example, children’s long-term exposure to low levels of benzene, generally classified as a carcinogen, also harms respiratory health.”

REALITY: It is essential to understand that toxicity is completely dependent on dose level and exposure. The mere presence of benzene, for example, does not mean that it is present in toxic levels, as the numerous air monitoring studies referred to earlier illustrate. EA insinuates that even low-level benzene exposure is harmful. But benzene is actually present in countless everyday products such as shampoo, toothpaste, paint, PVC pipes and countless plastic products.

Environment America Claim: “Fracking targets the oil and gas trapped in shale formations… Sometimes that means wells are drilled in rural areas, such as portions of Colorado or North Dakota, and sometimes that wells are in densely populated areas, such as Los Angeles…”

REALITY: There are no fracking or unconventional oil production operations in the city of Los Angeles — none. EA attempts to justify this claim by employing the common activist tactic of expanding the definition of fracking to encompass all oil and gas related activity:

“Throughout this report, we refer to “fracking” as including all of the activities needed to bring a well into production using high-volume hydraulic fracturing. This includes drilling the well, operating that well, processing the gas or oil produced from that well, and delivering the gas or oil to market. The oil and gas industry often uses a more restrictive definition of “fracking” that includes only the actual moment in the extraction process when rock is fractured – a definition that obscures the broad changes to environmental, health and community conditions that result from the use of high-volume hydraulic fracturing in oil and gas extraction.”

Fracking is not used as a completion technique at any of the urban drill sites in the city. All of the facilities recover oil through traditional water flood operations. The report’s attempt to shoehorn fracking and unconventional production into its report proves that it is not engaged in an honest attempt to inform the public.

Environment America Claim: “Because of the health hazard created by radon, Pennsylvania has a long record of radon measurements in homes. An analysis of those radon measurements by researchers at Johns Hopkins School of Public Health found that radon levels have increased in counties with extensive fracking since 2004, and also found elevated radon levels on the first floor of houses located within 12.5 miles of a fracked well.”

REALITY: The Johns Hopkins study EA is referring to actually found the highest concentrations of radon were in areas with no shale development and direct sampling found radon not linked to fracking. As is the case with so many of the studies EA uses as evidence, the authors merely speculated fracking was the cause.

Environment America Claim: “Oil and gas production at fracked wells releases volatile organic compounds and nitrogen oxides that contribute to the formation of smog.”

REALITY: Oil and gas production is not a major contributor to ground-level ozone.

As EID has emphasized before, publicly available information demonstrates oil and gas production is not a significant contributor to ozone levels. Vehicle exhaust adds far more non-methane volatile organic compounds (NMVOCs) and nitrogen oxides (NOx), both precursors to ground-level ozone, to the atmosphere than oil and gas production, as data from the EPA’s 2016 Greenhouse Gas Inventory clearly demonstrates.

Not only do oil and gas activities account for just six percent of total NOx emissions, which play more of a role in ground-level ozone formation than VOCs, but another recent NOAA report found that “The increased use of natural gas has…led to emissions reductions of NOx (40%) and SO2 (44%).”

Environment America Claim: “Contaminants can reach water supplies through faulty well construction, through surface spills, through improper wastewater disposal, or potentially through migration from the shale layer itself.”

REALITY: The EPA’s landmark five-year study confirmed, “hydraulic fracturing activities have not led to widespread, systemic impacts to drinking water resources,” and at least 15 other studies say the fracking process, specifically, has not contaminated groundwater.

Conclusion

EA’s claims in this report — aimed at generating headlines — are quite profound.

“Schools and day care centers should be safe places for kids to play and learn,” said Rachel Richardson, director of Environment America’s Stop Drilling program and co-author of the report. “Unfortunately our research shows far too many kids may be exposed to dirty air and toxic chemicals from fracking right next door.”

The problem is EA’s “research” merely found that there are some schools, nursing homes and hospitals near oil and natural gas development. It made no effort to collect its own data to support its claim that this is leading to adverse health effects.

Instead, it relied on long-debunked studies and tired fear tactics. Maybe that’s why the report’s hyperbolic claim that it “serves as a reminder of the unacceptable dangers of fracking, its potential to harm, and the need to bring this risky form of drilling to an end” was virtually ignored by the media.

3. Extensive Research Study Found No Link Between Groundwater Pollution and Fracking

(Source: National Science Foundation and Duke University study summarized by Jeffrey Folks for American Thinker The science is settled, fracking is safe.)

Among the 130 wells studied, the researchers found only a subset of cases, including seven in Pennsylvania and one in Texas, in which faulty well construction or cementing was to blame for the seepage of gases into groundwater. According to Professor Avner Vengosh of Duke University, “[t]hese results appear to rule out the migration of methane up into drinking water aquifers from depth because of horizontal drilling or hydraulic fracturing.” That is to say, in the rare cases where it occurs, gases are entering the water supply from outside the borehole as a result of faulty well construction or poor cementing, both of which are manageable problems.

While the new report answers the most important question, proving beyond doubt that fracking itself does not cause gas to seep into the water supply, it does not address several other important questions. One of these is the frequency of contamination of water supplies by naturally occurring petroleum, methane, and other gases.

Natural pollution of this kind would seem to be extremely common, and in fact this natural process has been known for millennia. At sites where petroleum seeped to the surface, as in the vicinity of the 19th-century Drake oil field in Pennsylvania, Native Americans had made use of the oily substance as a lubricant for hundreds if not thousands of years. That oil, flowing naturally to the surface, was “contaminating” nearby streams and groundwater.

What humans add to natural emissions as a result of drilling is so minor as to be of little consequence. If some future study confirmed this fact, it would help to counter the myth that oil and gas drilling is polluting an otherwise pure land and sea environment. The reality is that wherever shale and other carbon-rich formations occur, natural leakage of petroleum and/or methane is inevitable. Oil and gas are naturally occurring features that are constantly interacting with the environment and entering the water supply through natural processes. As is so often the case, the idea that there once existed an environment free of all that modern intellectuals might consider unpleasant is simply a fantasy.

The NSF/Duke report is crucial to the debate over the safety of hydraulic fracturing. The oil and gas industry has already achieved a near perfect safety record, given the handful of failed wells in proportion to more than one million that have been fracked. The industry needs to continue working to achieve certainty that wells do not fail. It also needs to do a better job of communicating its intention to do so to a skeptical public.

4. Is Fracking Safe? The 10 Most Controversial Claims About Natural Gas Drilling by Seamus McGraw Popular Mechanics 2016

Members of Congress, gas companies, news organizations, drilling opponents: They’ve all made bold claims about hydraulic fracturing (fracking) and the U.S. supply of underground natural gas. We take on 10 controversial quotes about natural gas and set the record straight.

“WE ARE THE SAUDI ARABIA OF NATURAL GAS.” SEN. JOHN KERRY, D-MASS., MAY 2010

Less than a decade ago, industry analysts and government officials fretted that the United States was in danger of running out of gas. No more. Over the past several years, vast caches of natural gas trapped in deeply buried rock have been made accessible by advances in two key technologies: horizontal drilling, which allows vertical wells to turn and snake more than a mile sideways through the earth, and hydraulic fracturing, or fracking. Developed more than 60 years ago, fracking involves pumping millions of gallons of chemically treated water into deep shale formations at pressures of 9000 pounds per square inch or more. This fluid cracks the shale or widens existing cracks, freeing hydrocarbons to flow toward the well.

These advances have led to an eightfold increase in shale gas production over the past decade. According to the Energy Information Administration, shale gas will account for nearly half of the natural gas produced in the U.S. by 2035. But the bonanza is not without controversy, and nowhere, perhaps, has the dispute over fracking grown more heated than in the vicinity of the Marcellus Shale. According to Terry Engelder, a professor of geosciences at Penn State, the vast formation sprawling primarily beneath West Virginia, Pennsylvania and New York could produce an estimated 493 trillion cubic feet of gas over its 50- to 100-year life span. That’s nowhere close to Saudi Arabia’s total energy reserves, but it is enough to power every natural gas-burning device in the country for more than 20 years. The debate over the Marcellus Shale will shape national energy policy, including how fully, and at what cost, we exploit this vast resource.
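As a rough check on the arithmetic behind that 20-year claim, here is a minimal Python sketch. It only divides the quoted 493 trillion cubic feet by the quoted 20 years; the resulting yearly figure is an implied ceiling derived from the article's own numbers, not an official consumption statistic, and the function name is made up for illustration.

```python
# Figures quoted in the paragraph above (Engelder's Marcellus estimate).
MARCELLUS_ESTIMATE_TCF = 493.0   # trillion cubic feet
YEARS_OF_SUPPLY_CLAIMED = 20.0   # "more than 20 years"


def implied_annual_use_tcf(resource_tcf: float, years: float) -> float:
    """Annual use (tcf/year) at which the resource would last exactly `years`."""
    if years <= 0:
        raise ValueError("years must be positive")
    return resource_tcf / years


# Hypothetical helper applied to the quoted numbers only.
ceiling = implied_annual_use_tcf(MARCELLUS_ESTIMATE_TCF, YEARS_OF_SUPPLY_CLAIMED)
print(f"Implied national use: under {ceiling:.2f} tcf per year")
```

Because the article says "more than 20 years", the computed value is an upper bound on the yearly consumption the claim assumes.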

“HYDRAULIC FRACTURING SQUANDERS OUR PRECIOUS WATER RESOURCES.” Green Party of Pennsylvania, April 2011

There is no question that hydraulic fracturing uses a lot of water: It can take up to 7 million gallons to frack a single well, and at least 30 percent of that water is lost forever, after being trapped deep in the shale. And while there is some evidence that fracking has contributed to the depletion of water supplies in drought-stricken Texas, a study by Carnegie Mellon University indicates the Marcellus region has plenty of water and, in most cases, an adequate system to regulate its usage. The amount of water required to drill all 2916 of the Marcellus wells permitted in Pennsylvania in the first 11 months of 2010 would equal the amount of drinking water used by just one city, Pittsburgh, during the same period, says environmental engineering professor Jeanne VanBriesen, the study’s lead author. Plus, she notes, water withdrawals of this new industry are taking the place of water once used by industries, like steel manufacturing, that the state has lost. Hydrogeologist David Yoxtheimer of Penn State’s Marcellus Center for Outreach and Research gives the withdrawals more context: Of the 9.5 billion gallons of water used daily in Pennsylvania, natural gas development consumes 1.9 million gallons a day (mgd); livestock use 62 mgd; mining, 96 mgd; and industry, 770 mgd.
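To put Yoxtheimer's figures side by side, the short Python sketch below converts each quoted daily withdrawal into a percentage of the 9.5 billion gallons used daily in Pennsylvania. The numbers are the ones quoted above; the variable and function names are illustrative only.

```python
# Daily water use in million gallons per day (mgd), as quoted above.
TOTAL_MGD = 9_500.0  # 9.5 billion gallons per day statewide

sector_mgd = {
    "natural gas development": 1.9,
    "livestock": 62.0,
    "mining": 96.0,
    "industry": 770.0,
}


def share_of_total(mgd: float, total: float = TOTAL_MGD) -> float:
    """Return a sector's withdrawal as a percentage of the statewide total."""
    return 100.0 * mgd / total


shares = {name: share_of_total(mgd) for name, mgd in sector_mgd.items()}

for name, pct in shares.items():
    print(f"{name}: {pct:.2f}% of daily statewide use")
```

On these figures natural gas development comes to about two hundredths of one percent of daily use, well below each of the other sectors listed.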

“NATURAL GAS IS CLEANER, CHEAPER, DOMESTIC, AND IT’S VIABLE NOW.” OILMAN TURNED NATURAL-GAS CHEERLEADER T. BOONE PICKENS, SEPTEMBER 2009

Burning natural gas is cleaner than oil or gasoline, and it emits half as much carbon dioxide, less than one-third the nitrogen oxides, and 1 percent as much sulfur oxides as coal combustion. But not all shale gas makes it to the fuel tank or power plant. The methane that escapes during the drilling process, and later as the fuel is shipped via pipelines, is a significant greenhouse gas. At least one scientist, Robert Howarth at Cornell University, has calculated that methane losses could be as high as 8 percent. Industry officials concede that they could be losing anywhere between 1 and 3 percent. Some of those leaks can be prevented by aggressively sealing condensers, pipelines and wellheads. But there’s another upstream factor to consider: Drilling is an energy-intensive business. It relies on diesel engines and generators running around the clock to power rigs, and heavy trucks making hundreds of trips to drill sites before a well is completed. Those in the industry say there’s a solution at hand to lower emissions—using natural gas itself to power the process. So far, however, few companies have done that.

“[THERE’S] NEVER BEEN ONE CASE—DOCUMENTED CASE—OF GROUNDWATER CONTAMINATION IN THE HISTORY OF THE THOUSANDS AND THOUSANDS OF HYDRAULIC FRACTURING [WELLS]” SEN. JAMES INHOFE, R-OKLA., APRIL 2011

The senator is incorrect. In the past two years alone, a series of surface spills, including two blowouts at wells operated by Chesapeake Energy and EOG Resources and a spill of 8000 gallons of fracking fluid at a site in Dimock, Pa., have contaminated groundwater in the Marcellus Shale region. But the idea stressed by fracking critics that deep-injected fluids will migrate into groundwater is mostly false. Basic geology prevents such contamination from starting below ground. A fracture caused by the drilling process would have to extend through the several thousand feet of rock that separate deep shale gas deposits from freshwater aquifers. According to geologist Gary Lash of the State University of New York at Fredonia, the intervening layers of rock have distinct mechanical properties that would prevent the fissures from expanding a mile or more toward the surface. It would be like stacking a dozen bricks on top of each other, he says, and expecting a crack in the bottom brick to extend all the way to the top one. What’s more, the fracking fluid itself, thickened with additives, is too dense to ascend upward through such a channel. EPA officials are closely watching one place for evidence otherwise: tiny Pavillion, Wyo., a remote town of 160 where high levels of chemicals linked to fracking have been found in groundwater supplies. Pavillion’s aquifer sits several hundred feet above the gas cache, far closer than aquifers atop other gas fields. If the investigation documents the first case of fracking fluid seeping into groundwater directly from gas wells, drillers may be forced to abandon shallow deposits—which wouldn’t affect Marcellus wells.

“THE GAS ERA IS COMING, AND THE LANDSCAPE NORTH AND WEST OF [NEW YORK CITY] WILL INEVITABLY BE TRANSFORMED AS A RESULT. WHEN THE VALVES START OPENING NEXT YEAR, A LOT OF POOR FARM FOLK MAY BECOME TEXAS RICH. AND A LOT OF OTHER PEOPLE—ESPECIALLY THE ECOSENSITIVE NEW YORK CITY CROWD THAT HAS SETTLED AMONG THEM—WILL BE APOPLECTIC AS THEIR PRISTINE WEEKEND SANCTUARY IS CONVERTED INTO AN INDUSTRIAL ZONE, CRISSCROSSED WITH DRILL PADS, PIPELINES, AND ACCESS ROADS.” New York magazine, Sept. 21, 2008

Much of the political opposition to fracking has focused on the Catskill region, headwaters of the Delaware River and the source of most of New York City’s drinking water. But the expected boom never happened: there’s not enough gas in the watershed to make drilling worthwhile. “No one has to get excited about contaminated New York City drinking water,” Penn State’s Engelder told the Times Herald-Record of Middletown, N.Y., in April. The shale is so close to the surface that it’s not concentrated in large enough quantities to make recovering it economically feasible. But just to the west, natural gas development is dramatically changing the landscape. Drilling rigs are running around the clock in western Pennsylvania. Though buoyed by the economic windfall, residents fear that regulators can’t keep up with the pace of development. “It’s going to be hard to freeze-frame and say, ‘Let’s slow down,’” Sen. Robert P. Casey Jr., D-Pa., said last fall. “That makes it more difficult for folks like us, who say we want to create the jobs and opportunity in the new industry, but we don’t want to do it at the expense of water quality and quality of life.”

“NATURAL GAS IS AFFORDABLE, ABUNDANT AND AMERICAN. IT COSTS ONE-THIRD LESS TO FILL UP WITH NATURAL GAS THAN TRADITIONAL GASOLINE.” REP. JOHN LARSON, D-CONN., CO-SPONSOR OF H.R. 1380, A MEASURE THAT WOULD PROVIDE TAX INCENTIVES FOR THE DEVELOPMENT AND PURCHASE OF NATURAL GAS VEHICLES, MARCH 2011

That may be true. Plus, there’s another incentive: Vehicles powered by liquefied natural gas, propane or compressed natural gas run cleaner than cars with either gasoline or diesel in the tank. According to the Department of Energy, if the transportation sector switched to natural gas, it would cut the nation’s carbon-monoxide emissions by at least 90 percent, carbon-dioxide emissions by 25 percent and nitrogen-oxide emissions by up to 60 percent. But it’s not realistic: Nationwide, there are only about 3500 service stations (out of 120,000) that offer natural gas-based automotive fuel, and it would cost billions of dollars and take years to develop sufficient infrastructure to make that fuel competitive with gasoline or diesel. And only Honda makes a car that can run on natural gas. That doesn’t mean natural gas has no role in meeting the nation’s short-term transportation needs. In fact, buses in several cities now rely on it, getting around the lack of widespread refueling opportunities by returning to a central terminal for a fill-up. The same could be done for local truck fleets. But perhaps the biggest contribution natural gas could make to America’s transportation picture would be more indirect: as a fuel for electric-generation plants that will power the increasingly popular plug-in hybrid vehicles.
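The infrastructure gap is easier to see as a percentage. This small Python sketch uses only the two station counts quoted above (both approximate in the article); the variable names are illustrative.

```python
# Station counts quoted above (approximate figures from the article).
NATURAL_GAS_STATIONS = 3_500
ALL_STATIONS = 120_000

# Share of service stations offering natural gas-based fuel, in percent.
station_share_pct = 100.0 * NATURAL_GAS_STATIONS / ALL_STATIONS

# Stations that would still need natural gas pumps for full coverage.
stations_without = ALL_STATIONS - NATURAL_GAS_STATIONS

print(f"Stations offering natural gas fuel: {station_share_pct:.1f}%")
print(f"Stations without it: {stations_without:,}")
```

Roughly 3 stations in 100 offer the fuel, which is the scale of build-out the article says would take billions of dollars and years.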

“DO NOT DRINK THIS WATER” HANDWRITTEN SIGN IN THE DOCUMENTARY GASLAND, 2010

It’s an iconic image, captured in the 2010 Academy Award-nominated documentary GasLand. A Colorado man holds a flame to his kitchen faucet and turns on the water. The pipes rattle and hiss, and suddenly a ball of fire erupts. It appears a damning indictment of the gas drilling nearby. But Colorado officials determined the gas wells weren’t to blame; instead, the homeowner’s own water well had been drilled into a naturally occurring pocket of methane. Nonetheless, up to 50 layers of natural gas can occur between the surface and deep shale formations, and methane from these shallow deposits has intruded on groundwater near fracking sites. In May, Pennsylvania officials fined Chesapeake Energy $1 million for contaminating the water supplies of 16 families in Bradford County. Because the company had not properly cemented its boreholes, gas migrated up along the outside of the well, between the rock and steel casing, into aquifers. The problem can be corrected by using stronger cement and processing casings to create a better bond, ensuring an impermeable seal.

“AS NEW YORK GEARS UP FOR A MASSIVE EXPANSION OF GAS DRILLING IN THE MARCELLUS SHALE, STATE OFFICIALS HAVE MADE A POTENTIALLY TROUBLING DISCOVERY ABOUT THE WASTEWATER CREATED BY THE PROCESS: IT’S RADIOACTIVE.” ProPublica, November 2009

Shale has a radioactive signature—from uranium isotopes such as radium-226 and radium-228—that geologists and drillers often measure to chart the vast underground formations. The higher the radiation levels, the greater the likelihood those deposits will yield significant amounts of gas. But that does not necessarily mean the radioactivity poses a public health hazard; after all, some homes in Pennsylvania and New York have been built directly on Marcellus shale. Tests conducted earlier this year in Pennsylvania waterways that had received treated water—both produced water (the fracking fluid that returns to the surface) and brine (naturally occurring water that contains radioactive elements, as well as other toxins and heavy metals from the shale)—found no evidence of elevated radiation levels. Conrad Dan Volz, former scientific director of the Center for Healthy Environments and Communities at the University of Pittsburgh, is a vocal critic of the speed with which the Marcellus is being developed—but even he says that radioactivity is probably one of the least pressing issues. “If I were to bet on this, I’d bet that it’s not going to be a problem,” he says.

“CLAIMING THAT THE INFORMATION IS PROPRIETARY, DRILLING COMPANIES HAVE STILL NOT COME OUT AND FULLY DISCLOSED WHAT FRACKING FLUID IS MADE OF.” Vanity Fair, June 2010

Under mounting pressure, companies such as Schlumberger and Range Resources have posted the chemical compounds used in some of their wells, and in June, Texas became the first state to pass a law requiring full public disclosure. This greater transparency has revealed some oddly benign ingredients, such as instant coffee and walnut shells—but also some known and suspected carcinogens, including benzene and methanol. Even if these chemicals can be found under kitchen sinks, as industry points out, they’re poured down wells in much greater volumes: about 5000 gallons of additives for every 1 million gallons of water and sand. A more pressing question is what to do with this fluid once it rises back to the surface. In Texas’s Barnett Shale, wastewater can be reinjected into impermeable rock 1.5 miles below ground. This isn’t feasible in the Marcellus Shale region; the underlying rocks are not porous enough. Currently, a handful of facilities in Pennsylvania are approved to treat the wastewater. More plants, purpose-built for the task, are planned. In the meantime, most companies now recycle this water to drill their next well.
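To put the additive figure in proportion, the ratio quoted above can be worked out directly. The sketch below is only an illustration of that arithmetic: the function name `additive_share` is made up for this example, and the only inputs are the two volumes stated in the excerpt (5000 gallons of additives per 1 million gallons of water and sand).

```python
def additive_share(additive_gal: float, base_gal: float) -> tuple[float, float]:
    """Return (ratio to base fluid, share of total mixture) as fractions.

    Hypothetical helper for illustration; not taken from any cited source.
    """
    if additive_gal < 0 or base_gal <= 0:
        raise ValueError("volumes must be non-negative, with a positive base volume")
    # Additives measured against the water-and-sand volume alone.
    ratio_to_base = additive_gal / base_gal
    # Additives measured against everything pumped down the well.
    share_of_total = additive_gal / (additive_gal + base_gal)
    return ratio_to_base, share_of_total


# Figures quoted in the excerpt: 5000 gallons per 1 million gallons.
ratio, share = additive_share(5_000, 1_000_000)
print(f"{ratio:.3%} of the water-and-sand volume")
print(f"{share:.3%} of the total mixture")
```

Either way the additives come to roughly half a percent of the fluid by volume, which is small as a proportion but, as the excerpt notes, still thousands of gallons per well.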

“THE INCREASING ABUNDANCE OF CHEAP NATURAL GAS, COUPLED WITH RISING DEMAND FOR THE FUEL FROM CHINA AND THE FALL-OUT FROM THE FUKUSHIMA NUCLEAR DISASTER IN JAPAN, MAY HAVE SET THE STAGE FOR A ‘GOLDEN AGE OF GAS.’” Wall Street Journal, summarizing an International Energy Agency report, June 6, 2011

There’s little question that the United States, with 110 years’ worth of natural gas (at the 2009 rate of consumption), is destined to play a major role in the fuel’s development. But even its most ardent supporters, men like T. Boone Pickens, concede that it should be a bridge fuel between more polluting fossil fuels and cleaner, renewable energy. In the meantime, the U.S. should continue to invest in solar and wind, conserve power and implement energy-efficient technology. Whether we can effectively manage our natural gas resource while developing next-gen sources remains to be seen. Margie Tatro, director of fuel and water systems at Sandia National Laboratories, says, “I think natural gas is a transitioning fuel for the electricity sector until we can get a greater percentage of nuclear and renewables on the grid.”

5.Compendium of Studies Demonstrating the Safety and Health Benefits of Fracking

The United States has made massive improvements in air quality over the past decade and study after study has shown that the increased use of natural gas for electricity generation – made possible by the shale revolution – is the reason we’ve achieved this feat.

This progress is the centerpiece of Energy In Depth’s new report – Compendium of Studies Demonstrating the Safety and Health Benefits of Fracking – which includes data from 23 peer-reviewed studies, 17 government health and regulatory agencies and reports from 10 research institutions that clearly demonstrate:

• Increased natural gas use – thanks to hydraulic fracturing – has led to dramatic declines in air pollution. The United States is the number one oil and gas producer in the world and it has some of the lowest death rates from air pollution in the world. Numerous studies have shown that pollution has plummeted as natural gas production has soared.

• Emissions from well sites and associated infrastructure are below thresholds regulatory authorities consider to be a threat to public health – that’s the conclusion of multiple studies using air monitors that measure emissions directly.

• There is no credible evidence that fracking causes or exacerbates asthma. In fact, asthma rates and asthma hospitalizations across the United States have declined as natural gas production has ramped up.

• There is no credible evidence that fracking causes cancer. Studies that have directly measured emissions at fracking sites have found emissions are below the threshold that would be harmful to public health.

• There is no credible evidence that fracking leads to adverse birth outcomes. In fact, adverse birth outcomes have decreased while life expectancy has increased in areas that are ramping up natural gas use.

• Fracking is not a credible threat to groundwater. Study after study has shown that there are no widespread, systemic impacts to drinking water from hydraulic fracturing.

It is well known that the shale revolution has been a boon to our nation’s economy, its geopolitical position, and the millions of consumers and manufacturers who continue to benefit from historically low energy costs. But the case in support of shale’s salubrious effect on air quality and health continues to be an underreported phenomenon – this new report puts the health benefits of our increased use of natural gas in the spotlight.

Conclusion

To be clear, no form of energy development, whether we’re talking about fossil fuels or renewables, is risk free. But the data clearly show, time and time again, that emissions from fracking are not a credible risk to public health.

In fact, the data show that enormous reductions in pollution across the board are attributable to the significant increases in natural gas consumption that hydraulic fracturing has made possible.

They show power plant emissions of SO2 declining by 86 percent, emissions of NOx declining by 67 percent, and emissions of mercury by 55 percent. They also show hospitalizations for asthma declining as natural gas ramps up. At the same time life expectancy and birth outcomes have improved.

And, of course, all these positive health outcomes can be largely traced back to significantly cleaner air, thanks to fracking.

via Science Matters

June 13, 2018 at 06:57PM