From the NORWEGIAN UNIVERSITY OF SCIENCE AND TECHNOLOGY and the “playing with climate models for doom outcomes is too stupid to be science” department.

The WUWT “artistic model” for this paper.

Envisioning a future where all the trees in Europe disappear

Using climate models to take a deeper look at the regional effects of global warming

Vegetation plays an important role in shaping local climate: just think of the cool shade provided by a forest or the grinding heat of the open desert.

But what happens when widespread changes, caused by or in response to global warming, take place across larger areas? Global climate models allow researchers to play out these kinds of thought experiments. The answers that result can serve as a warning or a guide to help policymakers make future land use decisions.

With this as a backdrop, a team of researchers from the Norwegian University of Science and Technology and Justus-Liebig University Giessen in Germany decided to use a regional climate model to see what would happen if land use in Europe changed radically. They looked at what would happen to air temperature, precipitation, and temperature extremes if Europe were completely deforested to either bare land or just ground vegetation. They also considered what might happen if Europe’s cropland were converted to either evergreen or deciduous forests.

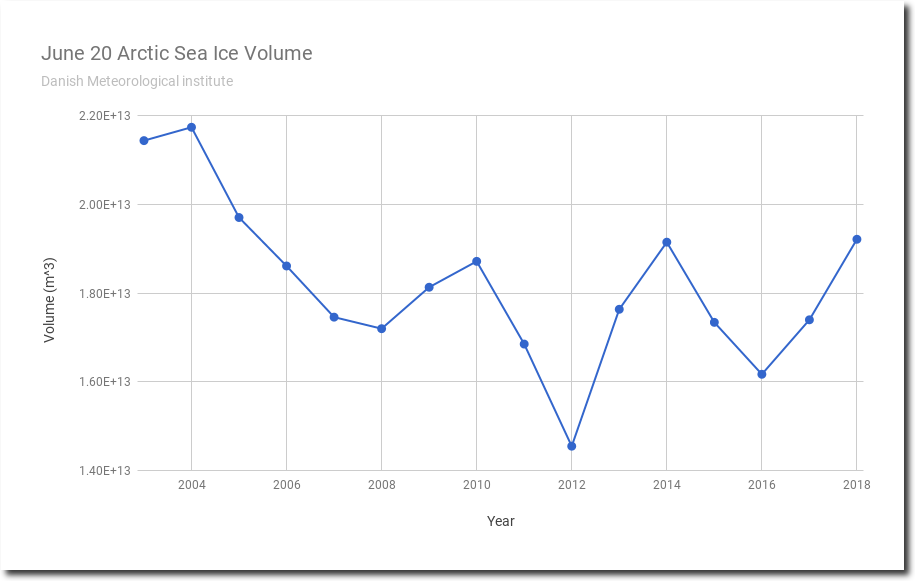

The researchers knew that climate change impacts tend to be underestimated at a regional level, “because the projected global mean temperature changes are dampened by averaging over the oceans, and are much smaller than the expected regional effects over most land areas,” the team wrote in their paper, recently published in Environmental Research Letters. “This applies to both mean and extreme effects, as changes in regional extremes can be greater than those in global mean temperature up to a factor of three.”
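The averaging effect the team describes can be illustrated with a small area-weighted mean. This is only a sketch: the ocean fraction is a rough textbook figure, and the two warming values are hypothetical numbers chosen for illustration, not results from the paper.

```python
# Sketch of how averaging over the oceans dampens a global mean.
# The warming values below are hypothetical, NOT taken from the paper.

def area_weighted_mean(changes, area_fractions):
    """Return the mean of `changes` weighted by `area_fractions`."""
    total_area = sum(area_fractions)
    weighted_sum = sum(c * a for c, a in zip(changes, area_fractions))
    return weighted_sum / total_area

OCEAN_FRACTION = 0.71               # oceans cover roughly 71% of Earth's surface
LAND_FRACTION = 1.0 - OCEAN_FRACTION

ocean_warming = 1.0                 # hypothetical change over oceans, in °C
land_warming = 2.0                  # hypothetical change over land, in °C

global_mean = area_weighted_mean(
    [ocean_warming, land_warming],
    [OCEAN_FRACTION, LAND_FRACTION],
)

print(f"Global mean change: {global_mean:.2f} °C")
print(f"Land-only change:   {land_warming:.2f} °C")
```

With these made-up inputs the global mean lands well below the land-only value, which is the sense in which a global average can understate what happens over a given land region.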

“We wanted to perform a quantitative analysis of how much land cover changes can affect local climate. Important transitions in the land use management sector are envisioned in the near future, and we felt it was important to benchmark the temperature response to extreme land cover changes,” said Francesco Cherubini, a professor in NTNU’s Industrial Ecology Programme, and first author of the study. “Decisions regarding land uses are frequently taken at a subnational level by regional authorities, and regional projections of temperature and precipitation effects of land cover changes can help to maximize possible synergies of climate mitigation and adaptation policies, from the local to the global scale.”

Future extreme land use changes are not as improbable as you might think. As the global population continues to grow, more land will come under pressure to produce food.

Alternatively, demand for crops for biofuels could also drive what kind of vegetation is cultivated and where.

One set of visions of what the future world might look like, called the Shared Socio-economic Pathways, estimates that global forest area could change by anywhere from about -500 million hectares to +1000 million hectares by 2100, with between 200 and 1500 million hectares of land needed to grow bioenergy crops. In fact, the higher end of this range could be realized under the most ambitious climate change mitigation targets.

Changes in land use can have a complicated effect on local and regional temperatures.

When the ground cover is altered, it changes how much water is retained by the soil or lost to evaporation. It can also affect how much sunlight the ground reflects, which scientists call albedo.

The researchers knew that other studies had shown contradictory effects, particularly from deforestation. Some showed that deforestation reduced air temperatures near the ground surface, and increased daily temperature extremes and number of hot days in the summer. Other studies found increases in the occurrence of hot dry summers.

But when the researchers ran their model to see what would happen if land was deforested, they found a slight annual cooling over the region overall, but big differences locally.

Their model showed that when forests were replaced by bare land, temperatures cooled by just 0.06 °C regionally. The cooling was slightly greater (0.13 °C regionally) if the researchers assumed that forests were replaced by herbaceous vegetation. In some locations, the average cooling can exceed 1 °C.

On their own, these regional changes may not seem like much. But when the researchers looked more closely at how these changes were distributed across the region, they found that there was a cooling in the northern and eastern part of the region, and a warming effect in western and central Europe. They also found that deforestation led to increased summer temperature extremes.

“Regional cooling from deforestation might look counter-intuitive, but it is the outcome of the interplay among many different physical processes. For example, trees tend to mask land surface and increase the amount of solar energy that is not reflected back to the space but it is kept in the biosphere to warm the climate,” said Bo Huang, a postdoc in the Industrial Ecology Programme who was one of the paper’s co-authors. “This particularly applies to areas affected by seasonal snow cover, because open land areas covered by snow are much more reflective than snow-covered forested land.”
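The snow-cover effect Huang describes comes down to simple bookkeeping: the share of incoming sunlight a surface absorbs is one minus its albedo. The sketch below assumes purely illustrative albedo values and an arbitrary incoming flux. None of these numbers come from the paper, and a real regional climate model accounts for far more than this one term.

```python
# Sketch of the albedo bookkeeping behind the snow-cover effect.
# All numbers are illustrative assumptions, NOT values from the paper.

def absorbed_shortwave(incoming_w_m2, albedo):
    """Sunlight absorbed by a surface (W/m^2), given its albedo in [0, 1]."""
    if not 0.0 <= albedo <= 1.0:
        raise ValueError("albedo must be between 0 and 1")
    return (1.0 - albedo) * incoming_w_m2

INCOMING = 100.0            # hypothetical incoming solar flux, W/m^2

# Hypothetical albedos: snow on open land is bright, while a snow-covered
# forest stays darker because the canopy masks the snow beneath it.
ALBEDO_SNOWY_OPEN_LAND = 0.8
ALBEDO_SNOWY_FOREST = 0.3

open_land = absorbed_shortwave(INCOMING, ALBEDO_SNOWY_OPEN_LAND)
forest = absorbed_shortwave(INCOMING, ALBEDO_SNOWY_FOREST)

print(f"Absorbed by snowy open land: {open_land:.1f} W/m^2")
print(f"Absorbed by snowy forest:    {forest:.1f} W/m^2")
print(f"Extra energy kept by forest: {forest - open_land:.1f} W/m^2")
```

Under these assumed values the forest keeps several times more solar energy than the open snowfield, which is the direction of the effect in the quote. The size of the effect in any real location depends on inputs this sketch does not model.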

The researchers found an annual average cooling across the whole of Europe, but with a clear latitudinal trend and seasonal variability. Despite the average cooling effects, they found that deforestation tends to increase local temperatures in summer, and increase the frequency of extreme hot events.

When the researchers ran their model to see what would happen if cropland was replaced by either evergreen or deciduous forests, they found a general warming in large areas of Europe, with a mean regional warming of 0.15 °C when the transition was to evergreen forests and 0.13 °C if the transition was to deciduous forests.

Much as in the deforestation thought experiment, the researchers found that the changes were stronger at a local scale, as much as 0.9 °C in some places. And the magnitude and significance of the warming gradually increased at high latitudes and in the eastern part of the region. Areas in western Europe actually showed a slight cooling.

Cherubini says that understanding how regional vegetation changes play out at more local levels is important as decision makers consider land management policies to mitigate or adapt to climate change.

“It is important to increase our knowledge of land-climate interactions, because many of our chances to achieve low-temperature stabilization targets are heavily dependent on how we manage our land resources,” Cherubini said. “We need more research to further validate and improve the resolution of regional climate change projections, since they are instrumental to the design and implementation of the best land management strategies in light of climate change mitigation or adaptation.”

###

Here’s the paper: http://iopscience.iop.org/article/10.1088/1748-9326/aac794/meta

via Watts Up With That?

June 21, 2018 at 10:21AM

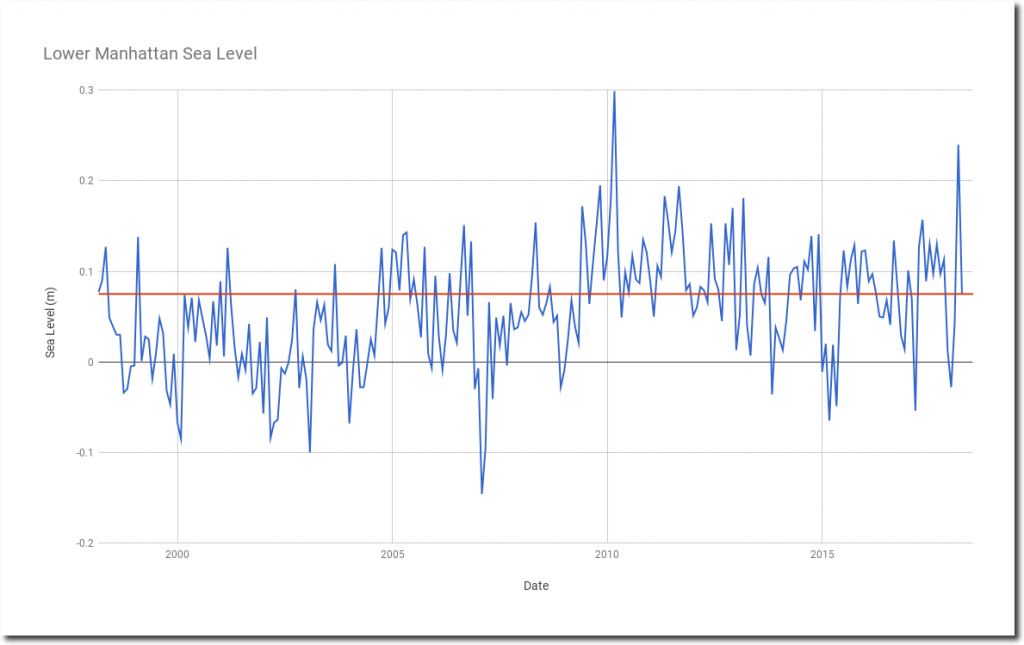

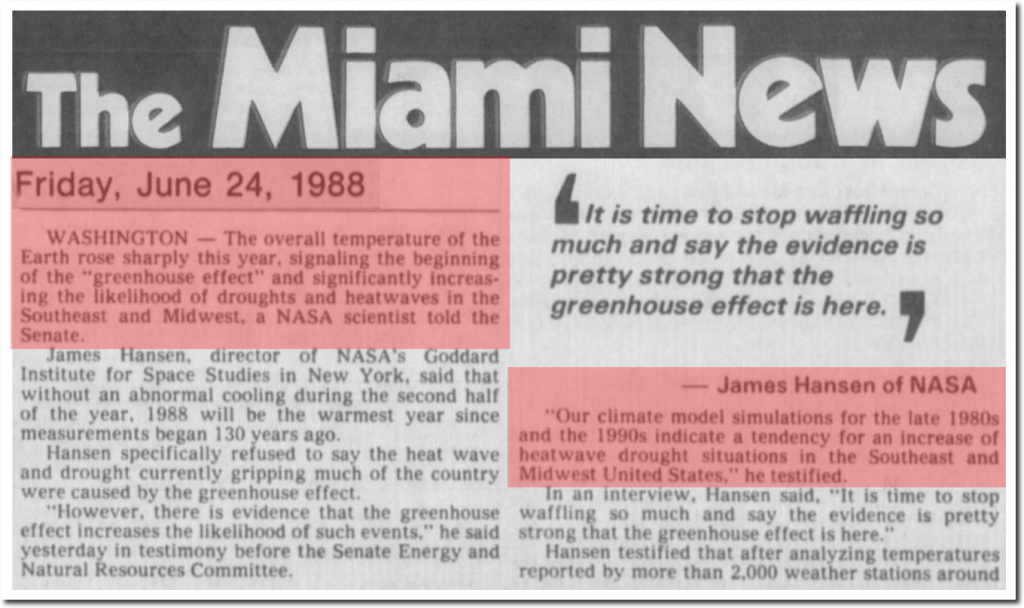

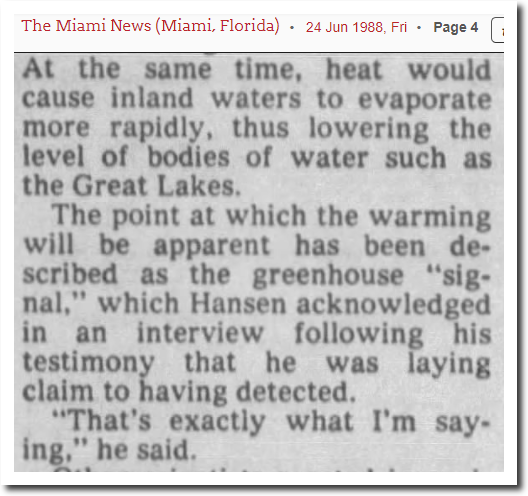

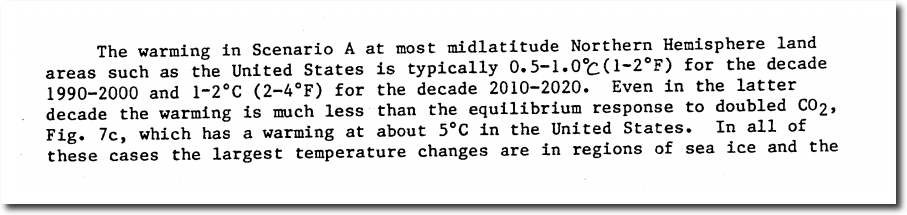

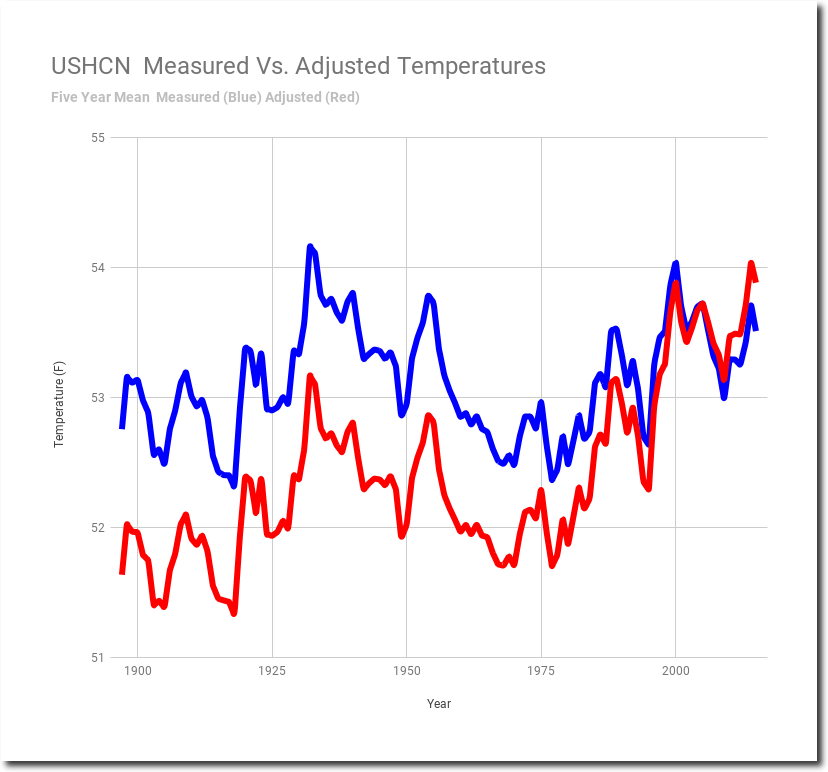

It is not just you thinking the world is not warming the way climate models predicted. The models are flawed, and their estimates of the climate’s future response to rising CO2 are way too hot. Yet these overcooked forecasts are the basis for policy makers to consider all kinds of climate impacts, from sea level rise to food production and outbreaks of Acne.
