You may have heard claims recently that the ocean is now “boiling”. Fortunately, a world expert in ocean heat uptake provides a deep dive into oceanic temperature history, thereby putting that fear to rest.

Geoffrey Gebbie of Woods Hole Oceanographic Institution has published a highly informative study, “Combining Modern and Paleoceanographic Perspectives on Ocean Heat Uptake,” in Annual Review of Marine Science (2021). H/T Kenneth Richard. Below are the main findings, along with excerpts (in italics, with my bolds) that explain some oceanography for the rest of us.

The large climatic shifts that started with the melting of the great ice sheets have involved significant ocean heat uptake that was sustained over centuries and millennia, and modern-ocean heat content changes are small by comparison.

Abstract

Monitoring Earth’s energy imbalance requires monitoring changes in the heat content of the ocean. Recent observational estimates indicate that ocean heat uptake is accelerating in the twenty-first century. Examination of estimates of ocean heat uptake over the industrial era, the Common Era of the last 2,000 years, and the period since the Last Glacial Maximum, 20,000 years ago, permits a wide perspective on modern-day warming rates. In addition, this longer-term focus illustrates how the dynamics of the deep ocean and the cryosphere were active in the past and are still active today. The large climatic shifts that started with the melting of the great ice sheets have involved significant ocean heat uptake that was sustained over centuries and millennia, and modern-ocean heat content changes are small by comparison.

Objective

This review seeks to put the most recent ocean heat uptake estimates of 0.5–0.7 W m−2 into the context of longer (multidecadal to millennial) timescales. Such timescales place present-day heat uptake in a wider perspective. In addition, the dynamics of these longer timescales may still have some expression today. This research direction leads to the long temperature time series of paleoceanographic proxies that predate the instrumental record. Ocean heat uptake over the last deglaciation (∼20,000–10,000 years ago) and the Common Era (previous two millennia) will serve as examples to explore the longer-timescale dynamics of ocean heat uptake.

Common Era Evolution of Mean Ocean Temperature

The Ocean2k global-mean SST compilation is derived from 57 marine proxy records that, in aggregate, show a statistically significant cooling trend from 700 to 1700 CE over the MCA–LIA transition. The data compilation contains a time series of 200-year averages that have been nondimensionalized. Here, we dimensionalize the values with the recommended values of McGregor et al. (2015) to obtain temperature anomalies, and the inferred global-mean surface cooling over the MCA–LIA transition is near the high end of the expected 0.4–0.6°C range (Figure 4a).
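Dimensionalizing a nondimensional compilation amounts to rescaling each 200-year bin value back into degrees Celsius. The sketch below assumes a simple linear form, anomaly = z × scale + offset. SCALE_C, OFFSET_C and the two bin values are hypothetical placeholders, not the McGregor et al. (2015) recommended values or actual Ocean2k data.

```python
# Sketch: convert nondimensional (standardized) 200-year bin values into
# temperature anomalies in degrees C, ASSUMING a linear rescaling
#   anomaly = z * scale + offset.
# SCALE_C and OFFSET_C are HYPOTHETICAL placeholders, not published values.

SCALE_C = 0.2   # hypothetical scale (standard deviation), degrees C
OFFSET_C = 0.0  # hypothetical reference mean, degrees C


def dimensionalize(z_values, scale=SCALE_C, offset=OFFSET_C):
    """Map standardized bin values to temperature anomalies in degrees C."""
    return [z * scale + offset for z in z_values]


# Hypothetical bins: one warm MCA-like bin and one cold LIA-like bin.
z_bins = {"800-1000 CE": 1.0, "1600-1800 CE": -1.5}
anomalies = dict(zip(z_bins, dimensionalize(z_bins.values())))
cooling = anomalies["800-1000 CE"] - anomalies["1600-1800 CE"]
print(f"Inferred MCA-LIA cooling: {cooling:.2f} C")
```

With real inputs, the published scale values would replace the placeholders. The MCA–LIA difference then comes directly from the bin anomalies.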

Figure 4. The Common Era. (a) The evolution of Ocean2k SST (blue circles, with σ/2 error bars) and mean ocean temperature as inferred from noble-gas measurements (red circles, with σ/2 error bars), the Gebbie & Huybers (2019) Common Era inversion (red line), and a power-law estimate (black line, with 2σ error shown in gray), referenced to global-mean SST in 1870. (b,c) Average ocean heat uptake over a running 50-year interval (panel b) and a 500-year interval (panel c) plotted from the Gebbie & Huybers (2019) inversion (red line) and a power-law estimate (black line, with 1σ error shown in gray). Heat uptake is expressed in terms of an equivalent planetary energy imbalance. Abbreviation: SST, sea-surface temperature.
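Expressing heat uptake as an “equivalent planetary energy imbalance” means dividing the change in ocean heat content over a window by the window length and by Earth’s total surface area. The minimal sketch below applies that idea to a running window. It assumes an annual heat-content series in zettajoules and uses approximate round constants. The input series is synthetic, not data from the review.

```python
# Sketch: average ocean heat uptake over a running window, expressed as an
# equivalent planetary energy imbalance (W m^-2 over Earth's whole surface).
# ASSUMES an annual heat-content series in ZJ; constants are approximate.

EARTH_AREA_M2 = 5.1e14      # approximate surface area of Earth, m^2
SECONDS_PER_YEAR = 3.156e7  # approximate length of a year, s
JOULES_PER_ZJ = 1e21        # 1 zettajoule = 1e21 J


def running_uptake_wm2(years, heat_zj, window):
    """Return (center_year, W m^-2) for every window spanning `window` years."""
    out = []
    for i in range(len(years) - window):
        j = i + window
        d_heat_j = (heat_zj[j] - heat_zj[i]) * JOULES_PER_ZJ
        d_t_s = (years[j] - years[i]) * SECONDS_PER_YEAR
        out.append(((years[i] + years[j]) / 2, d_heat_j / (d_t_s * EARTH_AREA_M2)))
    return out


# Synthetic example: a steady gain of 8 ZJ per year from 1950 to 2020.
years = list(range(1950, 2021))
heat = [8.0 * (y - 1950) for y in years]
series = running_uptake_wm2(years, heat, window=50)
center, flux = series[0]
print(f"{center:.0f}: {flux:.2f} W m^-2")
```

With these constants, a steady gain of roughly 8 ZJ per year corresponds to about 0.5 W m−2. That is the low end of the 0.5–0.7 W m−2 range quoted in the Objective.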

One realization of the Common Era was produced by an inversion that attempted to reconstruct the three-dimensional evolution of oceanic temperature anomalies over the last 2,000 years (Gebbie & Huybers 2019). The inversion fits an empirical ocean circulation model to modern-day tracer observations, historical temperature observations from the HMS Challenger expedition of 1872–1876 (Murray 1895), and the global-mean Ocean2k SST. The resulting ocean temperature evolution is dominated by the propagation of surface climate anomalies from the MCA and LIA into the subsurface ocean, where the propagation is coherent for several centuries (red line in Figure 4a). Although the Gebbie & Huybers (2019) inversion was not constrained with oceanic power laws, the resulting mean ocean temperature is consistent with a power-law estimate over the Common Era.

Early-twenty-first-century SST may already be warmer than MCA SST, but it is less likely that modern mean ocean temperature has surpassed MCA values.

From the Gebbie & Huybers (2019) inversion, it was inferred that the MCA ocean stored 1,000 ZJ more than the ocean of the year 2000, and that the ∼500 ZJ of heat uptake during the modern warming era is just one-third of what is required to reach MCA levels. Amplification of the high-latitude SST signal relative to the global mean can produce a greater MCA–LIA mean ocean cooling, which explains the greater MCA heat content relative to the present day. When considering the range of Common Era scenarios consistent with a power law, however, some cases are admitted where the MCA and the present day have similar oceanic heat content.
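The “one-third” figure follows if the ∼500 ZJ of modern uptake is compared with the total deficit relative to the MCA that existed when modern warming began. That deficit is the 1,000 ZJ still missing in 2000 plus the 500 ZJ already regained. The check below assumes that reading and uses only the numbers quoted in the excerpt.

```python
# Heat-budget arithmetic using only the values quoted in the excerpt (ZJ).
mca_excess_over_2000 = 1000.0  # MCA ocean held ~1,000 ZJ more than in 2000
modern_uptake = 500.0          # ~500 ZJ gained during the modern warming era

# Before modern warming began, the ocean sat below the MCA level by the
# heat still missing in 2000 plus the heat regained since then.
deficit_at_start = mca_excess_over_2000 + modern_uptake
fraction_recovered = modern_uptake / deficit_at_start

print(f"Deficit before modern warming: {deficit_at_start:.0f} ZJ")
print(f"Fraction of the way back to MCA levels: {fraction_recovered:.2f}")
```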

Deep-Ocean Heat Uptake During Modern Warming

Figure 6. Ocean heat uptake below 2,000-m depth, in terms of a planetary energy imbalance, for 50-year averages given by Zanna et al. (2019) (blue line), Gebbie & Huybers (2019) (red line), and the power-law estimate from this review (black line, with 2σ error in gray). An observational estimate (purple, with 2σ error bar) for 1990–2010 is also included (Purkey & Johnson 2010).

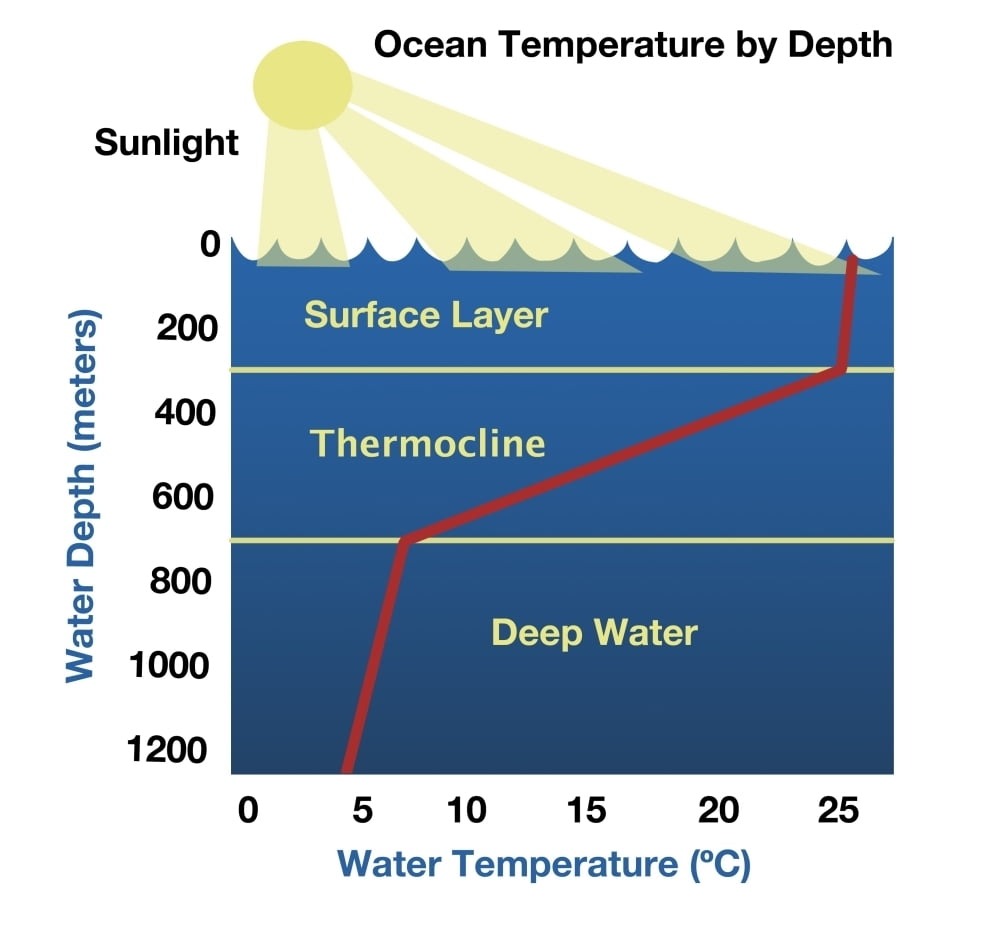

The confidence in upper-ocean heat content during the modern warming era starkly contrasts with the remaining uncertainties in heat content below 2,000-m depth (Figure 6). Observational estimates have indicated a deep-ocean heat uptake of 68 ± 61 mW m−2 (2σ) when differencing hydrographic sections between 1990 and 2010 (Purkey & Johnson 2010, Desbruyères et al. 2017). Estimation of deep-ocean heat uptake over the entire instrumental era relies to a greater extent on circulation models. Simulations of modern warming that are initialized from equilibrium in 1870 suggest that heat penetrates downward (Gregory 2000) and that average deep-ocean heat uptake is small over 50-year time intervals (Zanna et al. 2019). These estimates would not capture ongoing trends from the earlier Common Era, if any existed. An inversion that accounts for the LIA found a deep-ocean heat loss of 80 mW m−2 early in the modern warming era (Gebbie & Huybers 2019), and our power-law estimate suggests that an even greater cooling is possible, although the uncertainties are large. These discrepancies highlight the ongoing effect that Common Era variability could play in the modern-day ocean. Unfortunately, recent observations do not appear to be sufficient to distinguish between these scenarios, as they all suggest a weak deep-ocean heat uptake in the early twenty-first century.
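Converting these fluxes back into total heat helps show how small the deep-ocean signal is. The sketch below assumes each flux is normalized over Earth’s whole surface, as in Figure 6, and uses approximate round constants. Treating the 80 mW m−2 loss as sustained over one 50-year averaging window is also an illustrative assumption.

```python
# Sketch: total heat (ZJ) implied by a mean flux quoted in mW m^-2 as a
# planetary energy imbalance. Constants are approximate round values.

EARTH_AREA_M2 = 5.1e14      # approximate surface area of Earth, m^2
SECONDS_PER_YEAR = 3.156e7  # approximate length of a year, s


def imbalance_to_zj(mw_per_m2, years):
    """Heat in ZJ for a mean flux (mW m^-2 over Earth's surface) held for `years`."""
    joules = (mw_per_m2 / 1000.0) * EARTH_AREA_M2 * years * SECONDS_PER_YEAR
    return joules / 1e21


central = imbalance_to_zj(68, 20)  # 68 mW m^-2 over 1990-2010
spread = imbalance_to_zj(61, 20)   # its 2-sigma uncertainty
loss = imbalance_to_zj(-80, 50)    # ASSUMED: -80 mW m^-2 held for 50 years

print(f"1990-2010 deep uptake: {central:.0f} +/- {spread:.0f} ZJ")
print(f"Deep loss over a 50-year window: {loss:.0f} ZJ")
```

On these assumptions, the 1990–2010 observational estimate amounts to roughly 20 ZJ, and its uncertainty is nearly as large as the signal itself.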

Deep-ocean cooling could exist as the result of disequilibrium between the upper and deep ocean.

Oceanic disequilibrium exists at a range of spatial and temporal scales, from local, short-term variability to longer-term changes that are anticipated to generally have greater spatial extent. Oceanic disequilibrium has been anticipated as a result of the 1815 Tambora (Stenchikov et al. 2009) and 1883 Krakatoa (Gleckler et al. 2006) volcanic eruptions and their lingering effects on energy imbalance. More generally, ocean disequilibrium can result from the differing adjustment times of the interior ocean to surface forcing, where the deep-ocean response may take longer than 1,000 years (e.g., Wunsch & Heimbach 2008). Accordingly, some influence of changes in surface climate over the last millennium is potentially present today. The most isolated waters of the mid-depth Pacific, for example, should still be adjusting to the MCA–LIA transition. In this scenario, these deep waters are cooling, but they are anomalously warm due to the residual influence of the MCA.
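The scenario in the last two sentences can be illustrated with a toy model. A deep box relaxes toward the surface anomaly that left the surface a fixed number of years earlier. This is NOT the empirical circulation model used by Gebbie & Huybers (2019). The lag, the relaxation time, and the stepwise surface history below are all hypothetical values chosen only to show how a lagged deep ocean can be warm and cooling at the same time.

```python
# Toy lagged-relaxation model of a deep, isolated water mass.
# NOT the Gebbie & Huybers (2019) model; all numbers are HYPOTHETICAL.

LAG_YEARS = 500    # hypothetical transit time from the surface to the deep box
TAU_YEARS = 500.0  # hypothetical relaxation (mixing) time of the deep box


def surface_anomaly(year):
    """Hypothetical stepwise surface history in degrees C (values are made up)."""
    if year < 1300:
        return 0.3   # MCA-like warm period
    if year < 1850:
        return -0.3  # LIA-like cold period
    return 0.2       # modern warming


def deep_anomaly(end_year, start_year=0, lag=LAG_YEARS, tau=TAU_YEARS):
    """Step the deep box forward one year at a time (forward Euler).

    The box starts in equilibrium with the early warm period and relaxes
    toward the surface anomaly from `lag` years earlier.
    """
    deep = surface_anomaly(start_year - lag)
    for year in range(start_year, end_year):
        deep += (surface_anomaly(year - lag) - deep) / tau
    return deep


now = deep_anomaly(2000)
trend = deep_anomaly(2001) - now  # change over the following year
print(f"Deep anomaly in 2000: {now:+.2f} C")
print(f"Trend (linearized): {trend * 100:+.3f} C per century")
```

In this toy setup, the deep box in 2000 is still about 0.1°C warmer than the reference, yet it is cooling. It is responding to the LIA signal that arrived around 1800, and the modern surface warming has not reached it yet.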

The degree to which the ocean’s long memory affects today’s ocean is uncertain due to difficulties in integrating state-of-the-art circulation models over the entire Common Era. An accurate assessment may also require a model that can skillfully predict ocean circulation changes in both the past and the future. The climate history of the Common Era should also be better constrained by recovering additional observations, such as historical subsurface temperature observations and paleoceanographic data. Proper inference of climate sensitivity depends on the past oceanic heat uptake, which this review suggests is tied to the long timescale of deep-ocean dynamics.

Do notice the scale on the left axis, as though we could measure the whole ocean (71% of Earth’s surface) to within 0.05°C. It’s a formula converting zettajoules to temperature change.
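The post does not show the exact formula behind that axis. The usual bulk relation is Q = M c_p ΔT, where M is the mass of the global ocean and c_p is the specific heat of seawater. The sketch below assumes that relation with round values for M and c_p, which are assumptions rather than the review’s constants.

```python
# Sketch: convert between ocean heat content change (ZJ) and whole-ocean
# mean temperature change (degrees C) using Q = M * c_p * dT.
# Constants are ASSUMED round values, not the review's exact numbers.

OCEAN_MASS_KG = 1.4e21  # approximate mass of the global ocean, kg
CP_SEAWATER = 3990.0    # approximate specific heat of seawater, J kg^-1 K^-1
JOULES_PER_ZJ = 1e21


def zj_to_mean_temp_change(heat_zj):
    """Whole-ocean mean temperature change (degrees C) for a heat change in ZJ."""
    return heat_zj * JOULES_PER_ZJ / (OCEAN_MASS_KG * CP_SEAWATER)


def mean_temp_change_to_zj(delta_c):
    """Heat change (ZJ) needed to shift the whole-ocean mean by delta_c degrees C."""
    return delta_c * OCEAN_MASS_KG * CP_SEAWATER / JOULES_PER_ZJ


for q in (500, 1000):
    print(f"{q} ZJ -> {zj_to_mean_temp_change(q):.3f} C")
print(f"0.05 C -> {mean_temp_change_to_zj(0.05):.0f} ZJ")
```

Under these assumptions, the ∼500 ZJ of modern uptake works out to a whole-ocean mean change of under 0.1°C. A 0.05°C step on the axis corresponds to roughly 280 ZJ.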

via Science Matters

September 5, 2023 at 06:10PM