It has only taken ten years, which is how long a few of us have been detailing major problems with how the Australian Bureau of Meteorology measures daily temperatures. Now, I’m informed, the Bureau is ditching the current system and looking to adopt an overseas model that it claims will be more reliable.

There will be no media release.

There was no media release when the Bureau ditched its rainfall forecasting system, POAMA, once described as state of the art, and quietly adopted ACCESS-S1, based on the UK Met Office’s GloSea5-GC2. (As though the British are any better at accurate rainfall and snowfall forecasts.)

Until yesterday, the Bureau claimed that one-second spot temperature readings from its custom-designed resistance probes did not need to be numerically averaged, something overseas bureaus routinely do in an attempt to achieve consistency with measurements from the more inert traditional mercury thermometers.
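To illustrate what numerical averaging of spot readings involves, here is a minimal Python sketch. It assumes a logger that produces one reading per second, with readings averaged over one-minute blocks before the daily maximum is taken; the window length and the function names are assumptions for illustration, and this is not the Bureau’s, the Met Office’s or the WMO’s code.

```python
from statistics import mean


def one_minute_means(readings, window=60):
    """Average consecutive blocks of `window` one-second readings.

    A trailing partial block (fewer than `window` readings) is dropped.
    """
    full_blocks = len(readings) // window
    return [mean(readings[i * window:(i + 1) * window]) for i in range(full_blocks)]


def daily_maximums(readings, window=60):
    """Return (max of raw one-second spot readings, max of one-minute means)."""
    return max(readings), max(one_minute_means(readings, window))


# Illustrative only: two minutes at a steady 25.0 °C with one one-second spike to 25.6 °C.
readings = [25.0] * 120
readings[30] = 25.6
spot_max, averaged_max = daily_maximums(readings)
print(f"spot maximum: {spot_max:.2f}, one-minute maximum: {averaged_max:.2f}")
```

In this toy case the single spike sets the spot maximum, but it moves the one-minute mean by only about a hundredth of a degree, which is why the averaging choice matters when comparing against a slower-responding mercury thermometer.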

Consistency across long temperature series is, of course, critical to accurately assessing climate variability and change.

The Australian Bureau has long claimed numerical averaging is not necessary because its ‘thick’ probe design exactly mimicked a mercury thermometer.

Then this design was phased out and replaced with the ‘slimline’. Still no inter-comparison studies.

I welcome the switch to ‘the overseas model’ if this means that the Bureau will begin numerical averaging of spot readings from its resistance probes in accordance with World Meteorological Organisation recommendations.

But the problem of reliable temperature measurements doesn’t begin or end with numerical averaging.

The Bureau, and the Met Office in the UK, have been tinkering with how they measure temperatures since the transition from mercury thermometers to resistance probes began in the 1990s: not only with how they average (or not), but also with probe design and power supply.

It is important to understand that resistance probes hooked up to data loggers measure temperature as a change in electrical resistance across a piece of platinum. And, this is the important bit, the voltage delivered to the probe is critical for accurate temperature measurement. Not just in Australia, but around the world. And there are no standards.
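The relationship between resistance and temperature in a platinum probe is usually described by the Callendar-Van Dusen equation. The Python sketch below assumes a standard Pt100 element with the IEC 60751 coefficients, temperatures at or above 0 °C, and a simple logger that infers resistance as measured voltage divided by an assumed constant excitation current. The source does not say which sensor type or circuit the Bureau uses, and many loggers use ratiometric or four-wire measurement designed to cancel this kind of error, so the example shows the mechanism rather than the size of any real-world bias; the function names are hypothetical.

```python
import math

# IEC 60751 coefficients for a standard Pt100 element (valid for t >= 0 °C).
R0 = 100.0       # resistance in ohms at 0 °C
A = 3.9083e-3
B = -5.775e-7


def resistance_from_temp(t_c):
    """Callendar-Van Dusen equation for t >= 0 °C: R = R0 (1 + A t + B t^2)."""
    return R0 * (1 + A * t_c + B * t_c ** 2)


def temp_from_resistance(r_ohm):
    """Invert the quadratic above, taking the physically meaningful root."""
    return (-A + math.sqrt(A * A - 4 * B * (1 - r_ohm / R0))) / (2 * B)


def inferred_temp(measured_volts, assumed_current_amps):
    """Temperature a simple logger would report if it assumes R = V / I."""
    return temp_from_resistance(measured_volts / assumed_current_amps)


# Hypothetical case: true air temperature 25 °C; the logger assumes 1.000 mA
# of excitation current, but the circuit actually delivers 1.001 mA.
true_r = resistance_from_temp(25.0)
reported = inferred_temp(true_r * 1.001e-3, assumed_current_amps=1.000e-3)
print(f"reported {reported:.2f} °C for a true 25.00 °C")
```

Under these assumptions, a 0.1 percent mismatch between the actual and the assumed current shifts the reported temperature by roughly a quarter of a degree, because a Pt100 changes by only about 0.39 ohms per degree near 25 °C.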

When using a traditional mercury thermometer, temperature is read from a scale along a glass tube that shows the thermal expansion of the liquid inside, which happens to be mercury. The mercury thermometer was once the world standard.

The new, automated and potentially more precise method of measuring temperature via platinum resistance is reliable in controlled environments; the satellites that measure temperatures at different depths within the atmosphere use these resistance probes. But it gets much more complicated when trying to measure temperatures on Earth, especially at busy places like airports, which have become a primary site for the automated electronic weather stations using platinum resistance probes from which global average temperatures are now derived.

At airports, the electrical system relied upon to measure temperatures very precisely must be insulated from other electrical systems, including radar and even the chatter between a pilot wanting to land his jumbo and the control tower.

The electronics now used to measure climate change are susceptible not only to electrical interference at these airports but also to changes in voltage that can be caused by something as simple as turning runway lights on and off at dusk and dawn.

To know how reliable the new system is, we need the parallel data not just for Australia but also for overseas airports, including Heathrow, and Cochin in India, the world’s first airport fully powered by solar energy.

To know that warming globally has not, at least in part, been caused by a move to resistance probes, we need to see the inter-comparison data showing the equivalent temperature measurements from mercury thermometers at the same place and on the same day.

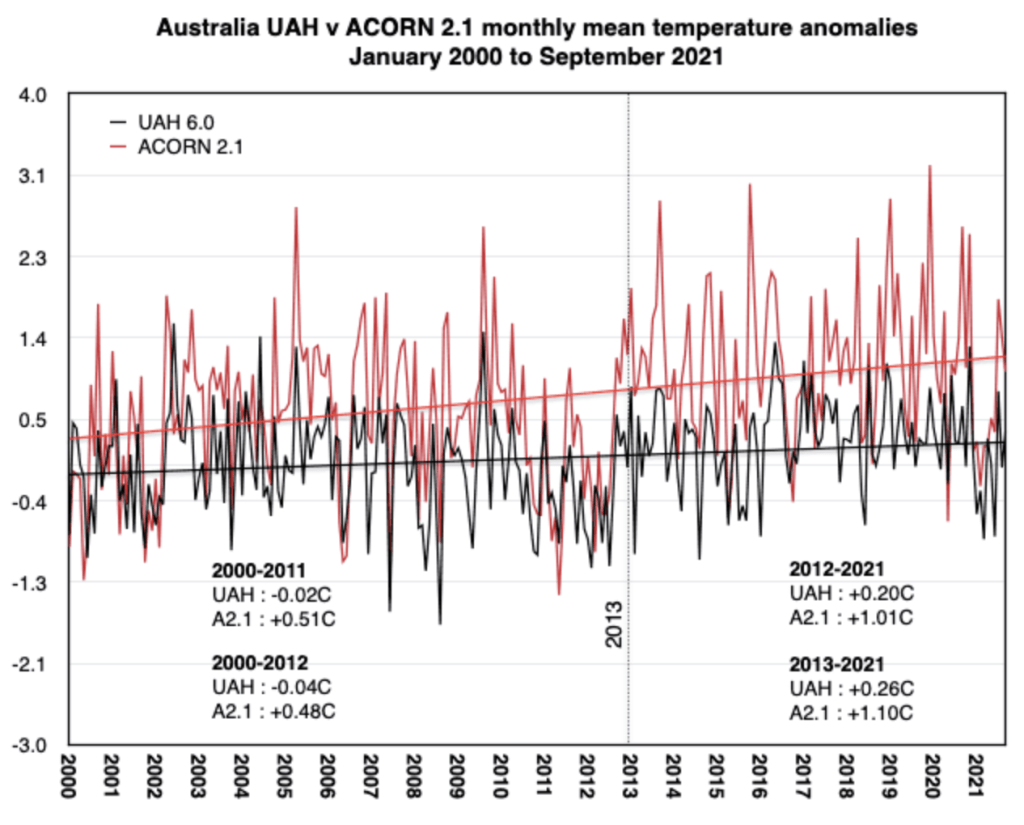

I’m reliably informed by a past Bureau employee that upgrading power supplies in 2012 caused an increase of 0.3 to 0.5 degrees Celsius across about 30 percent of the Australian network. (That would get us some way to the 1.5 degree Celsius tipping point, even if we closed down every coal-fired power station.)

Perth-based researcher Chris Gillham documented this uptick in Australian temperatures, in correspondence to me last October, as an abrupt change in the difference between the Bureau’s monthly mean temperature as reported from ACORN-SAT and the satellite data for Australia as measured by the University of Alabama in Huntsville.
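One way to look for the kind of abrupt change described here is to difference the two monthly series and search for the single breakpoint where the mean of the difference shifts most. The Python sketch below is a simplified illustration, not Gillham’s method (the source does not describe it); it assumes both inputs are monthly anomalies on a common baseline, aligned month by month, and the function names are hypothetical. Formal homogenisation tests go further and assess whether such a shift is statistically significant.

```python
from statistics import mean


def difference_series(surface, satellite):
    """Month-by-month difference: surface anomaly minus satellite anomaly."""
    return [s - u for s, u in zip(surface, satellite)]


def largest_step(diffs, min_segment=12):
    """Find the single split that maximises |mean after - mean before|.

    Returns (index of first month after the step, size of the shift).
    Each side must contain at least `min_segment` months; if the series is
    too short for that, returns (None, 0.0).
    """
    best_index, best_shift = None, 0.0
    for k in range(min_segment, len(diffs) - min_segment + 1):
        shift = mean(diffs[k:]) - mean(diffs[:k])
        if abs(shift) > abs(best_shift):
            best_index, best_shift = k, shift
    return best_index, best_shift
```

Requiring at least `min_segment` months on each side keeps the search from reporting spurious shifts driven by a handful of months at either end of the record.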

The step-up in warming in the official data for the entire Australian continent is also noted in peer-reviewed publications by climate scientists including Sophie Lewis and David Karoly. I have written to Sophie Lewis about the problems in relying on Bureau data. But instead of attributing the change to equipment and voltage, Lewis, Karoly and other climate scientists ascribe it to anthropogenic greenhouse warming.

Because university climate scientists ignore my correspondence and rely entirely on advice from the Bureau’s current management, they could not know otherwise. The Bureau’s current management refuses to report this change, documented by its technicians and communicated to me unofficially by retired former managers.

A relevant question: why did the Bureau’s Chief Executive, Andrew Johnson, not report the increase of 0.3 to 0.5 degrees Celsius across about 30 percent of the Australian network (caused entirely by a change to the power supply, not by air temperatures) in the Bureau of Meteorology’s 2013-14 Annual Report to Federal Parliament?

In that same report Johnson does comment on the infrastructure upgrades that caused the artificial warming in the official temperature data.

Meanwhile, the Bureau’s management, including Johnson, continues to lament the need for all Australians to work towards keeping temperatures below a 1.5 degrees Celsius tipping point. Just today we are told to expect another hike in the price of electricity because of the need to transition to renewables including solar.

The Bureau continues to support a transition to renewable energy without explaining the potential effect, even on the reliability of its own temperature measurements, just as it has not explained the effect of the transition to resistance probes more generally. (I’ve characterised the Ayers and Warne papers as fake in a 6-part jokers series, republished by WattsUpWithThat.)

An overseas colleague has explained how something as simple as applying a 100 Hz frequency to a power circuit to extend the life of a battery (necessary with solar systems) can cause maximum temperatures to drift up on sunny days. To be clear, as the voltage increased, the recorded temperature increased over and above any actual change in air temperature!

Go to the NASA page about temperature measurement and you will see a picture of someone atop a mountain in Montana next to a solar panel. That solar panel will be charging a battery that provides not only the voltage used to measure electrical resistance across the platinum wire but also the power for the periodic upload of that same temperature data to a satellite.

Since the transition to resistance probes that use voltage to measure temperature, problems at remote locations, from mountains to lighthouses, have included aging batteries, solar-charged of course, that are unable to provide sufficient current at critical times.

I’ve been shown data from one such remote location where minimum temperatures reliably drop 2 degrees Celsius on the hour, at the same time every hour through the night, as the battery is drained by each satellite upload of temperature data.

This is the same temperature data that is being used in Australia, and around the world, to justify extreme economic and social intervention in the name of stopping climate change.

IN SUMMARY

In the 1990s, not just in Australia, but around the world, there was a fundamental change in the equipment and methods used to measure temperatures.

This created a discontinuity in the long temperature series that begin around 1880 and that are used by the IPCC to measure climate variability and change.

Neither the IPCC, nor NASA, nor the UK Met Office has documented the effect of this change.

I’ve been asking the Australian Bureau, which provides data to the global databases, how it knows that temperature measurements from the resistance probes at places like Cape Otway lighthouse are consistent with readings from mercury thermometers. (I’ve written extensively about how temperatures are measured at this lighthouse, including in part 3 of my 8-part series about hyping daily maximum temperatures.)

Now I ask: how can the Bureau know that NASA and the UK Met Office are reliably measuring temperatures if it has not seen the US and UK parallel temperature datasets?

The parallel data are the recordings from the mercury thermometers measuring at the same place and on the same day as the resistance probes. These data will give some indication of the extent of the many discontinuities created in the record by the changeover to probes. I have estimated that the Bureau is holding parallel data for approximately 38 Australian locations, with on average 15 years of data for each.

When John Abbot first lodged a Freedom of Information request for some of this data for Brisbane Airport back in 2019, he was told that the parallel data did not exist.

Abbot took the issue to the Australian Information Commissioner, who sided with the Bureau, falsely confirming that the data did not exist.

It was only after an appearance at the Administrative Appeals Tribunal in Brisbane on 3rd February, where I attended as an expert witness, and the drawn-out mediation process that followed, that three years of Brisbane Airport parallel data were finally made available.

As Graham Lloyd subsequently reported on the front page of The Weekend Australian, my analysis of these data shows that the resistance probes at Brisbane Airport record temperatures that are quite different from those of the mercury thermometer most of the time. The Bureau has been able neither to confirm nor to deny the statistical significance of the difference. But it doesn’t dispute the actual numbers.
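For readers wondering what testing the significance of such a difference involves, here is a minimal Python sketch of a paired comparison of same-day readings from the two instruments. It assumes two equal-length lists of daily values from one site (for example, daily maxima from the probe and from the mercury thermometer); the function name and the default tolerance are arbitrary illustrative choices, not the Bureau’s or my own method. The paired t statistic treats days as independent, and daily temperatures are autocorrelated, so a careful analysis would need to allow for that before claiming significance.

```python
import math
from statistics import mean, stdev


def paired_difference_summary(probe, mercury, tolerance=0.1):
    """Compare same-day readings from two instruments at one site.

    Differences are probe minus mercury. Returns (mean difference,
    fraction of days where |difference| > tolerance, paired t statistic).
    """
    if len(probe) != len(mercury) or len(probe) < 2:
        raise ValueError("need two equal-length series with at least two days")
    diffs = [p - m for p, m in zip(probe, mercury)]
    mean_diff = mean(diffs)
    frac_outside = sum(abs(d) > tolerance for d in diffs) / len(diffs)
    sd = stdev(diffs)
    if sd == 0:
        # Every daily difference is identical, so the standard error is zero:
        # report 0 if there is no offset, otherwise an infinite t of the same sign.
        t_stat = 0.0 if mean_diff == 0 else math.copysign(math.inf, mean_diff)
    else:
        t_stat = mean_diff / (sd / math.sqrt(len(diffs)))
    return mean_diff, frac_outside, t_stat
```

Reporting the fraction of days outside a tolerance alongside the mean difference shows whether the instruments disagree often, even when the average offset is small.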

John Abbot recently lodged another FOI request for more parallel data for Brisbane Airport. This time around, the Bureau has acknowledged the existence of the data, and even that some of the ‘field books’ have already been scanned and so are available in electronic form. But the Bureau claims it will only be able to release another three years of data for this one site, never mind the 15 years of parallel data that exist for Brisbane Airport and for another 37 locations that vary geographically and electrically. We need this comparative data, not least to assess the reliability of the current global warming forecasts.

I’ve been reliably informed that the Bureau is intent on drawing out the provision of this parallel data for Australia to Abbot and me, while changing the ‘model’ it uses to measure temperatures, as though the overseas systems are reliable.

So, I ask, again, where are these numbers for overseas locations, including the parallel data for the overseas mountains and airports – not to mention lighthouses?

It is critical for everyone to be able to see this data, especially if the Australian Bureau is to adopt an overseas model for temperature measurement on the basis that it must be more reliable.

*****

The feature image shows me at the Goulburn Airport weather station in late July 2017. This weather station was shown to have had a limit set on how cold a temperature could be recorded, for a period of 20 years, until Lance Pidgeon and I blew the whistle on the fiasco.

via Jennifer Marohasy

May 25, 2023 at 08:03PM